I’ve mentioned previously how not having up to date statistics can cause problems in query performance. This post looks at something called the Ascending Key Problem which can badly affect your cardinality estimation in some cases and therefore your execution plans.

The Ascending Key Problem relates to the most recently inserted data in your table, which is therefore also the data that may not yet have been sampled and included in the statistics histograms. This sort of issue is one of the reasons it can be critical to update your statistics more frequently than the built-in automatic thresholds would.

We’ll look at the problem itself, but also some of the mitigations that you can take to deal with it within SQL Server.

The Problem

Imagine you have a table that stores a set of events. As new records are inserted they get stamped with the current date and time. You regularly query that table based on that EventDate looking to find recent events, let’s say just for the current day.

Even if you haven’t indexed the EventDate column (though why haven’t you?!), as long as you have AUTO_CREATE_STATISTICS and AUTO_UPDATE_STATISTICS on for your database you’ll have “reasonably” up to date statistics for that column.
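If you’re not sure whether those options are switched on, sys.databases will tell you. A minimal check for the current database might look like this:

```sql
-- Check the auto-statistics settings for the current database
SELECT name,
       is_auto_create_stats_on,
       is_auto_update_stats_on
FROM sys.databases
WHERE database_id = DB_ID();
```

A value of 1 in each column means the option is enabled.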

But “reasonably” may not be good enough. When you’re querying against the most recent data it may not yet have been sampled by a statistics update and the range you are looking for may fall beyond the top of the histogram captured in the statistics object for EventDate. Imagine that statistics were last updated yesterday. When the Optimizer checks the statistics to estimate a rowcount for today’s date it finds that is above the top bound. So what should it guess?

Historically it would guess that there were zero rows, but because cardinality estimates are always raised to a minimum of 1, the estimate comes out as one row. If the real answer is a lot larger you might end up with a bad plan.

Let’s look at that in practice.

Staying true to the example above, I create a table called Events and I index the EventDate column:

CREATE TABLE dbo.Events

(

Id INT IDENTITY(1,1) CONSTRAINT PK_Events PRIMARY KEY CLUSTERED,

EventName VARCHAR(255) NOT NULL,

EventDate DATETIME CONSTRAINT DF_Events_EventDate DEFAULT (GETDATE())

);

CREATE INDEX IX_Events_EventDate ON dbo.Events(EventDate) include (EventName);

Then I insert records to represent events at one minute intervals for 100 days:

--Insert data for 100 days at minute intervals from the start of this year

DECLARE @StartDate DATETIME = '20170101 00:00:00';

INSERT INTO dbo.Events(EventName, EventDate)

SELECT

'Event' + CAST(num.n AS VARCHAR(10)),

DATEADD(minute,num.n,@StartDate)

FROM

(

SELECT TOP 144000 row_number() OVER(ORDER BY (SELECT NULL)) AS n

FROM sys.objects a, sys.objects b, sys.objects c

) num;

I’m going to query to check what date range was inserted. That should have the additional advantage of triggering a statistics update:

SELECT MIN(EventDate), MAX(EventDate)

FROM dbo.Events;

As a slight digression, it’s interesting to look at the execution plan here:

You can see two index scans. That sounds horrendous, scan the index twice to find the MIN and MAX? If you look at the properties though you can see it only read one row in each case:

An index scan doesn’t have to read all the records in the index, it can bail out once it is satisfied. For a MIN or MAX type query it makes perfect sense just to jump to one end of the index and start scanning.

The side lesson is that Scans aren’t always bad for performance.

Anyway, back to the topic in hand. Now let’s look at the statistics:

DBCC SHOW_STATISTICS('dbo.Events',IX_Events_EventDate);

You can see they’re up to date. They show 144,000 rows in total which is correct. Interestingly the Histogram (bottom result-set) only has a couple of steps. SQL has determined that the data is uniformly distributed so has bunched it all together. Clever stuff!
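If you only want one of the result-sets, DBCC SHOW_STATISTICS lets you ask for it by name. For example, to return just the histogram:

```sql
-- Return only the histogram steps for the index statistics
DBCC SHOW_STATISTICS('dbo.Events', IX_Events_EventDate) WITH HISTOGRAM;
```

The highest RANGE_HI_KEY value is the top of the histogram. Any rows with an EventDate above that are invisible to the statistics until the next update.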

Let’s insert data for another day:

--Insert one more day's data

DECLARE @StartDate DATETIME;

SELECT @StartDate = MAX(EventDate) FROM dbo.Events;

INSERT INTO dbo.Events(EventName, EventDate)

SELECT

'Event' + CAST(num.n AS VARCHAR(10)),

DATEADD(minute,num.n,@StartDate)

FROM

(

SELECT TOP 1440 row_number() OVER(ORDER BY (SELECT NULL)) AS n

FROM sys.objects a, sys.objects b, sys.objects c

) num;

Now I query to see the new events. I captured the MAX(EventDate) earlier so let’s use that to find the new records:

SELECT *

FROM dbo.Events

WHERE EventDate > '20170411'

OPTION (RECOMPILE);

(Notice I’ve added the option to recompile so I get a fresh plan each time I run this; that will be important for testing.)

Let’s not bother with the results, we all know there will be 1,440 records that are basically the same. Here’s the execution plan:

The interesting bit comes when I look at the properties for the Index Seek:

Estimated number of rows = 1, Actual = 1,440. That’s quite a long way out. Of course here we have a trivial query so the massive underestimate isn’t affecting our plan. If we started joining to other tables though it would likely result in a massively inefficient plan – perhaps choosing a Nested Loops join over a Hash or Merge.

Note that I’m using SQL Server 2012 for this test and I’m not using traceflag 2371, which reduces the threshold for statistics updates (see When do Statistics Get Updated?).

So I’ve got nearly another 30,000 rows to insert before statistics get automatically updated and my estimates come into line. If I’m always querying for the current day then it’s going to be very rare that statistics are going to be able to help me with a good estimate.
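To show where that figure comes from, here is the arithmetic as a rough sketch. It assumes the classic auto-update threshold of 500 rows plus 20% of the table’s row count, which is what applies without traceflag 2371. The variable names are just for illustration:

```sql
-- Classic auto-update threshold (assumed): 500 rows + 20% of the row count
-- at the time the statistics were last updated
DECLARE @RowsAtLastUpdate INT = 144000;
DECLARE @RowsInsertedSince INT = 1440;

SELECT 500 + (@RowsAtLastUpdate * 20 / 100) AS UpdateThreshold,                    -- 29,300
       500 + (@RowsAtLastUpdate * 20 / 100) - @RowsInsertedSince AS RowsStillToGo; -- 27,860
```

At one row a minute that is roughly another 19 days of inserts before the automatic update kicks in.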

So what’s the fix?

Before we get on to the methods that have been introduced to try and ameliorate this problem, if you face this sort of scenario you might want to consider whether you need to update your statistics objects more often than the auto-stats threshold. If you have a regular job to rebuild fragmented indexes then those indexes that get rebuilt will have their statistics refreshed – however that won’t cover the auto created statistics, and it won’t cover statistics on indexes that don’t get rebuilt.

So, if you don’t have a specific scheduled job to regularly update statistics that is definitely worth considering.
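As a starting point, the statement such a job would run is UPDATE STATISTICS. The sketch below uses FULLSCAN, which is an assumption on my part about what you’d want; on large tables a sampled update may be a better trade-off:

```sql
-- Refresh every statistics object on the table (index and auto-created), reading all rows
UPDATE STATISTICS dbo.Events WITH FULLSCAN;

-- Or target just the statistics that matter for the problem query
UPDATE STATISTICS dbo.Events IX_Events_EventDate WITH FULLSCAN;
```

How often you schedule it depends on how quickly your queries drift beyond the top of the histogram.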

In terms of how SQL has changed to help us, from SQL Server 2005 SP1, the nature of columns began to be tracked, monitoring updates of statistics to understand how the data changes. This additional information can be seen if you enable traceflag 2388, then view the statistics. Let’s have a look at what’s gathered. First I’m going to add a couple more days of data, updating the statistics between each insert, then I run the following:

DBCC TRACEON(2388);

DBCC SHOW_STATISTICS('dbo.Events',IX_Events_EventDate);

DBCC TRACEOFF(2388);

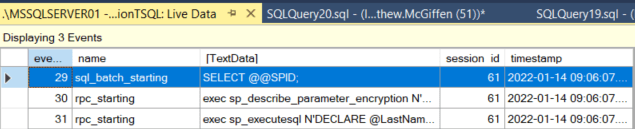

What you see here is historical information about the updates to the statistics. This is undocumented stuff, but some of what we see we can work out the meaning for. In particular we can see how many rows were inserted since the last statistics update, and how many of those values were above the top of the old histogram. We also see a column “Leading Column Type” which has a value of “Unknown”.

Now I’m going to insert another day’s data and update the statistics once more, then we’ll look at this again:

You can see that now we have a Leading Column Type of “Ascending”. After three updates to the statistics where the Leading Value was only increasing each time, SQL Server will identify that it is an ascending column. It must be at least three updates before SQL will recognise this, and if that stops being the case (i.e. some lower values are inserted) then the next statistics update will reset this until we again get three consecutive updates with only increasing values.

This happens in the background and you don’t need traceflag 2388 to make it happen; that is just so you can see what is going on.
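If you want to reproduce this yourself, each “insert a day, then update the statistics” cycle can be sketched as follows. It reuses the insert from earlier. The FULLSCAN option is an assumption, and a default sampled update may well behave the same way:

```sql
-- Add one more day of events, continuing from the current maximum
DECLARE @StartDate DATETIME;
SELECT @StartDate = MAX(EventDate) FROM dbo.Events;

INSERT INTO dbo.Events(EventName, EventDate)
SELECT 'Event' + CAST(num.n AS VARCHAR(10)),
       DATEADD(minute, num.n, @StartDate)
FROM
(
    SELECT TOP 1440 row_number() OVER(ORDER BY (SELECT NULL)) AS n
    FROM sys.objects a, sys.objects b, sys.objects c
) num;

-- Refresh the statistics manually rather than waiting for the auto-update threshold
-- (FULLSCAN is an assumption here)
UPDATE STATISTICS dbo.Events IX_Events_EventDate WITH FULLSCAN;
```

Run it once per day of data you want to add, then check the traceflag 2388 output after each pass.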

The obvious question is, now SQL knows my column is ascending, has that affected the estimation for my query? Before we look I’ll insert another day of data so there is some data beyond the histogram, and then I’ll query again:

SELECT *

FROM dbo.Events

WHERE EventDate > '20170415'

OPTION (RECOMPILE);

And the properties from the execution plan:

So nope. Nothing has changed.

Yet…

To tell the query optimizer to take advantage of this extra information for ascending keys we have traceflag 2389. Let’s enable that and run the query again:

DBCC TRACEON(2389);

SELECT *

FROM dbo.Events

WHERE EventDate > '20170415'

OPTION (RECOMPILE);

The properties:

Voila! SQL Server has now estimated my rowcount perfectly.

Now, be warned. This is a rather contrived example with a perfectly and artificially smooth distribution of data. The estimate is made by checking the current maximum value in the table, and using that combined with the information existing in the statistics and the value of your predicate to extrapolate a guess. If your data is evenly distributed as it is here then the guess will be pretty good, if it is fairly skewed then it may be bad.

In any case though it will probably be better than the fixed value of 1 that would have been used historically.

One thing to note is that traceflag 2389 is only going to have any effect if the leading column of the relevant statistics object has been marked as ascending. There is also traceflag 2390, and this will adopt a similar behaviour even if your column hasn’t been identified as ascending, i.e. it will check the maximum value in the table and if it is higher than the max value in the histogram, it will extrapolate to work out the cardinality estimate.
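If you want to test with both flags together, DBCC TRACEON accepts more than one traceflag number. A sketch for the current session only, using the same test query as before:

```sql
-- Enable both traceflags for the current session only
DBCC TRACEON(2389, 2390);

SELECT *
FROM dbo.Events
WHERE EventDate > '20170415'
OPTION (RECOMPILE);

-- Switch them off again when you're done testing
DBCC TRACEOFF(2389, 2390);
```

Turning them off afterwards keeps the rest of your session on the default behaviour.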

So should you turn on the traceflag(s) globally?

The Microsoft recommendation is not to enable traceflags such as these unless you are suffering from the specific problem they are aiming to resolve, and even then to make sure you test carefully to ensure they are achieving what you desire.

One issue can be that in more complex queries there are a number of cardinality estimates being made. It can be that two bad estimates within the same plan might cancel each other out and the query overall performs fine. If you then implement something that fixes one of them, you risk such queries going bad – a scenario known as plan regression.

This sort of scenario is one of the reasons why Microsoft have made very few core changes to the cardinality estimator since it came out.

So, use 2389 if you are specifically encountering this sort of ascending key problem, but also, if you are in the position to change the code then you might want to consider adding it as a query hint so it only affects the specific query you are targeting. For our example query above, that would simply look like:

SELECT *

FROM dbo.Events

WHERE EventDate > '20170415'

OPTION (RECOMPILE, QUERYTRACEON 2389);

Welcome to SQL Server 2014 (and later)

In 2014 we received a substantial revamp of the Cardinality Estimator, the first since SQL Server 7.0. A bunch of assumptions and algorithms have been re-jigged based on the real-world data that Microsoft have seen in supporting their wide customer base.

Key to having a new version was the concept that, henceforth, optimizer fixes would be tied to the database compatibility level. This means that customers can upgrade their SQL Server version but if they find performance problems related to the upgrade they can downgrade their database’s compatibility level while the issues are resolved within their codebase.
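As a sketch of what that looks like in practice, you can check and change the level as below. MyDatabase is a hypothetical name, so substitute your own:

```sql
-- Check the current compatibility level
SELECT name, compatibility_level
FROM sys.databases
WHERE name = 'MyDatabase';   -- hypothetical database name

-- Drop back to the SQL Server 2012 level (110), which uses the older cardinality estimator
ALTER DATABASE MyDatabase SET COMPATIBILITY_LEVEL = 110;
```

Levels of 120 and above use the new cardinality estimator, so setting the level back up again re-enables it.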

One of the items specifically looked at in the new version was this Ascending Key problem. To see how things work in the latest versions I’m going to repeat many of the steps above using a database deployed on SQL Server 2016.

So I:

- Create my table again

- Populate with 100 days of data

- Run a query to check the dates, which has the added benefit of updating statistics

- Add one more day’s data

Then I’m ready to run my test query again:

SELECT *

FROM dbo.Events

WHERE EventDate > '20170411'

OPTION (RECOMPILE);

I get the same execution plan as ever so again I jump to the properties of the Index Seek operator to look at the estimates:

Now, this is interesting. I might have expected I would get either 1 row estimated (the old model) or 1,440 (the model with traceflag 2389). Instead I get 432 rows. It seems the new CE (Cardinality Estimator) uses a different algorithm.

Sometimes numbers stick in your head. I happen to know that where no statistics are available and you are querying with an inequality predicate (<, >, <=, >=), the CE will estimate the number of rows to be 30% of the total number of rows in the table. This assumption seems to have originated in a 1979 research paper from IBM suggesting 1/3 was a good guess.

With 30% in my head I noticed that 432 is 30% of 1440. So it seems that the optimizer is recognising that we are querying for values above the histogram (where no statistics exist) with an inequality, it knows from somewhere that there have been 1440 rows inserted since the last statistics update, so it takes 30% of 1440 to produce the estimate (432).
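One way to sanity-check that theory is to look at the modification counter SQL Server keeps for each statistics object. Treat this as a sketch: that the optimizer takes its figure from this counter is an assumption, and sys.dm_db_stats_properties is only available on more recent builds (SQL Server 2008 R2 SP2 and 2012 SP1 onwards):

```sql
-- Assumption: the "rows inserted since the last update" figure matches modification_counter
SELECT s.name,
       sp.rows,
       sp.modification_counter,
       sp.modification_counter * 0.30 AS ThirtyPercentGuess
FROM sys.stats AS s
CROSS APPLY sys.dm_db_stats_properties(s.object_id, s.stats_id) AS sp
WHERE s.object_id = OBJECT_ID('dbo.Events')
  AND s.name = 'IX_Events_EventDate';
```

If the counter shows 1,440 then the last column works out as 432, which would match the estimate in the plan.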

To try to validate that theory I thought I’d query with a later datetime in the predicate. Sure enough, if I add 12 hours I still get 432 rows estimated. If I add 23 hours, 432 rows. In fact if I query for any date in the future, even outside of the maximum value in the table, guess what – I get an estimate of 432 rows.

I have a fascination for the algorithms involved in distribution statistics. It satisfies the maths geek in me. As such it’s difficult to end a post like this, there’s always more things to test, to try and work out. For instance what happens if you query across an interval that starts within the current histogram, but then extends above it? I’ll admit I’ve had a play, but will leave that for another post.

As a very final point in this post, I thought I’d just check whether the 2389 traceflag makes any difference to this estimation with the 2014 CE. I’ll change my query to look way into the future, enable the traceflag and look at the estimate:

SELECT *

FROM dbo.Events

WHERE EventDate > '99991231 23:59:59'

OPTION (RECOMPILE, QUERYTRACEON 2389);

Guess what? Still 432 rows… so no, the traceflag no longer appears to give us any benefit.

Though when we get to that date it’ll be someone else’s problem to sort out!
